How Would You Improve Spotify's Taste Profile?

The product sense question every AI PM candidate fumbles, and the framework that separates offer-worthy answers from generic ones

Dear readers,

Thank you for being part of our growing community. Here’s what’s new today:

AI Product Management:

How Would You Improve Spotify’s Taste Profile?

Note: This post is for our paid subscribers.

This question is not a test of your knowledge of Spotify. Interviewers who ask it are evaluating whether you understand the core design tension in modern AI personalization: the gap between what a system infers about a user and what the user actually wants the system to know. That gap is not a bug in the current generation of recommendation engines. It is the defining product problem.

Spotify’s original taste profile operated entirely on implicit signals: skips, replays, saves, playlist additions, and time-of-day listening patterns. The system was opaque by design, and Spotify’s product org treated opacity as a feature rather than a liability. If users could not see or override the model, they could not break it. Then something changed. User research and external commentary, including a widely cited 2022 piece in The Verge on algorithmic fatigue, documented that heavy Spotify users were experiencing what engineers internally called ‘personalization rot’: the longer you used the app, the more the algorithm doubled down on your past behavior rather than expanding your taste. A user who played Taylor Swift during a breakup found herself trapped in a sad-pop feedback loop for months.

What Spotify did next is the real subject of this interview question. They launched explicit controls, including artist bans, mood sliders, and the AI DJ feature that blends algorithmic picks with editorial context. These controls introduced a new design contract: the user can now tell the system things the system cannot infer. Your interviewer wants to know whether you understand why that matters, and whether you can reason about what to build next given that shift. Candidates who treat this as a feature brainstorm miss the entire point.

Where Most Candidates Fail

The three failure modes in answering this question are so consistent that interviewers at AI companies have started using them as negative signals with their own shorthand. The first is what hiring teams call ‘feature soup’: the candidate lists five to eight potential improvements with no prioritization logic and no connection to a coherent user problem. ‘I would add a mood selector, improve the algorithm with more data, integrate social listening, build a better onboarding flow, and add concert recommendations.’ Every item might be reasonable in isolation. Presented together without a thesis, they signal that the candidate cannot think in systems.

The second failure mode is ignoring the explicit controls that already exist. Candidates who spend three minutes pitching artist-blocking as their headline idea reveal that they did not research the product before walking in. Spotify shipped granular taste controls across its iOS and Android apps starting in 2023. Proposing something that already launched is not just embarrassing; it signals you do not do competitive research as a habit, which is disqualifying at the senior level.

The third and most subtle failure is what might be called ‘control theater’: the candidate acknowledges the explicit controls but treats them as the solution rather than as the beginning of a harder problem. Saying ‘Spotify should give users more control over their recommendations’ is a safe, popular answer that earns no credit in an AI-PM interview. The actual hard question is: when should the AI override what the user declared, and when should it defer? A user who bans all rap music but saves a Kendrick Lamar track creates a direct conflict. What does the system do? Candidates who cannot engage with that tension have not actually thought about AI personalization at the product level.


This is one of the most commonly asked product improvement questions at companies like Spotify, Apple Music, YouTube Music, JioSaavn, Gaana, and any PM role where recommendation systems, generative AI, or user trust are part of the product surface. Let us walk through how a strong candidate would answer this in a real interview.

Interview Tip: This question tests whether you understand the core tension in modern AI personalization: the gap between what a system infers about a user and what the user actually wants the system to know. Do not treat this as a feature brainstorm. Treat it as a systems-design question.

Step 1: Ask Clarifying Questions

Before jumping into solutions, I want to make sure I understand the scope of this question.

Q: When you say “Taste Profile,” are we talking about the underlying recommendation model, or the user-facing controls that let listeners shape their recommendations?

Let us assume we are looking at both: how the system understands user taste, and how the user can see and shape that understanding.

Q: What platform are we focused on? Mobile, desktop, smart speakers, car?

Let us focus primarily on the mobile app, since that is where most listening happens.

Q: Are we optimizing for engagement, retention, discovery, or some combination?

Let us say our north star is long-term retention through better discovery, not just session time.

Q: Which user segment should we prioritize? New users, casual listeners, or power users?

Let us focus on power users (those with 2+ years on the platform), since they are most likely to experience personalization fatigue.

Q: Should I consider the recently launched features like track exclusions (October 2025) and the new Taste Profile editor announced at SXSW (March 2026)?

Yes. The interviewer expects you to be current. Build on top of what exists.

Interview Tip: That last clarifying question is critical. If you pitch “let users exclude tracks from their taste profile” as your headline idea, you reveal that you did not research the product. Spotify shipped that feature globally in October 2025, and announced the full Taste Profile editor in beta in March 2026. Always do your product research before the interview.

Step 2: Understand the Current Product Landscape

Before proposing improvements, let me quickly map what Spotify already offers in this space as of early 2026:

Implicit signals the system already uses: Skips, replays, saves, playlist additions, time-of-day listening patterns, device context, session duration, and time-to-skip.
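To make the idea of implicit signals concrete in an interview, it can help to sketch how such events might roll up into a per-track score. The snippet below is only an illustration: the `Event` type, the `SIGNAL_WEIGHTS` values, and the 10-second early-skip rule are all assumptions made for this example, not a description of how Spotify actually weighs its signals.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only for illustration; not Spotify's values.
SIGNAL_WEIGHTS = {
    "save": 3.0,
    "playlist_add": 2.5,
    "replay": 1.5,
    "full_play": 1.0,
    "skip": -1.0,
}


@dataclass(frozen=True)
class Event:
    track_id: str
    signal: str                  # one of the SIGNAL_WEIGHTS keys
    seconds_played: float = 0.0  # used as a stand-in for time-to-skip


def affinity_scores(events):
    """Sum weighted implicit signals per track."""
    scores = {}
    for event in events:
        weight = SIGNAL_WEIGHTS.get(event.signal, 0.0)
        # Assumption: an early skip is stronger negative evidence than a late one.
        if event.signal == "skip" and event.seconds_played < 10:
            weight *= 2
        scores[event.track_id] = scores.get(event.track_id, 0.0) + weight
    return scores
```

The point to draw out is that every weight here is a product decision nobody showed the user, which is exactly why the explicit controls matter.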

Explicit controls users already have:

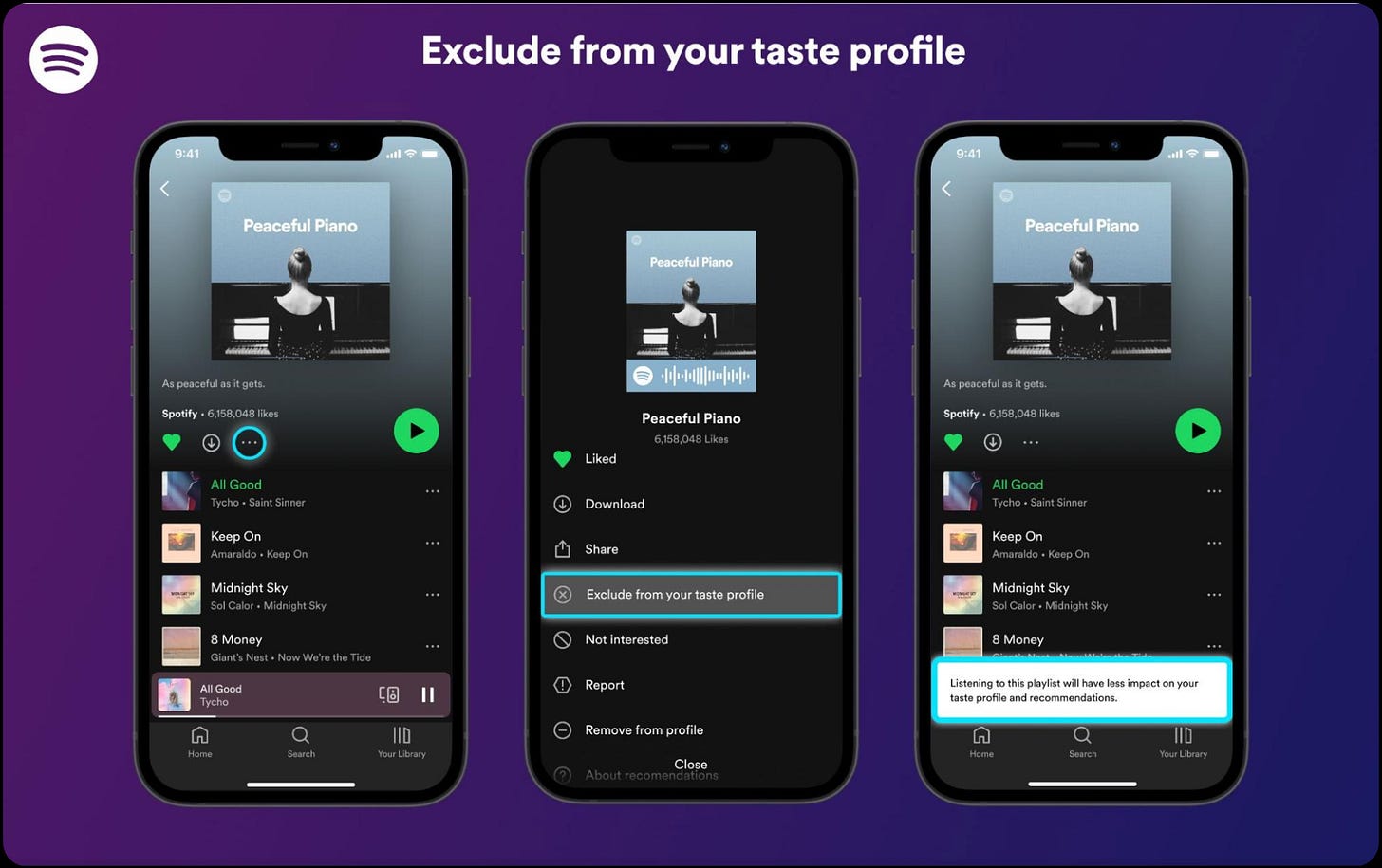

Exclude specific tracks from Taste Profile (launched October 2025, available globally for Free and Premium users).

Exclude playlists from Taste Profile (launched earlier).

Block specific artists from appearing in algorithmic playlists.

Genre selection in Discover Weekly (refreshed in 2025 with up to five genre options).

AI DJ with voice and text requests (English and Spanish, 60+ markets).

Prompted Playlist for natural-language playlist creation.

The new Taste Profile editor (announced March 13, 2026 at SXSW, beta in New Zealand) that lets users see how Spotify understands their taste and provide natural-language feedback.

The key insight: Spotify has been moving from a model where personalization is something done to users, toward one where personalization is a negotiation between user intent and machine inference. This is the philosophical shift your answer needs to build on, not repeat.

Step 3: Identify User Pain Points

Even with all these controls, power users still experience three core pain points:

1: Personalization Rot (Feedback Loop Traps)

The longer you use Spotify, the more the algorithm doubles down on your past behavior rather than expanding your taste. A user who played a lot of sad indie during a difficult month finds herself trapped in that mood for weeks afterward. Research on algorithmic fatigue confirms this is a real and growing problem across recommendation platforms. Spotify’s own data shows that user-driven listening tends to be more diverse than algorithmic listening, and that users who become more diverse listeners are less likely to churn.
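If the interviewer asks how you would measure this narrowing, one simple option is to compare the diversity of a listener's genres across two time windows. The sketch below uses Shannon entropy over genre labels. The function names and the `drop_threshold` default are assumptions for illustration, and a real system would likely use richer representations than genre tags.

```python
import math
from collections import Counter


def genre_entropy(genres):
    """Shannon entropy (in bits) of a list of genre labels."""
    counts = Counter(genres)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def is_narrowing(earlier, recent, drop_threshold=0.5):
    """Flag a listener whose recent window is markedly less diverse.

    drop_threshold is a hypothetical cutoff, in bits of entropy lost.
    """
    return genre_entropy(earlier) - genre_entropy(recent) >= drop_threshold
```

A metric like this gives you something to track alongside retention, so that "better discovery" becomes a measurable claim rather than a slogan.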

2: Context Collapse

The Taste Profile treats all listening as equal signal. But nursery rhymes played for a toddler, sleep sounds at night, and workout music at the gym belong to three completely different contexts. Even with track exclusions, the burden falls entirely on the user to manually exclude every off-taste track. This is especially painful in shared-device situations: family smart speakers, CarPlay sessions where a teenager takes over, or shared accounts.
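One way to state the problem precisely is to contrast "every play counts equally" with a version where each play carries a context-dependent weight. The sketch below is a minimal illustration under stated assumptions: the context labels, the `CONTEXT_WEIGHTS` values, and the shared-device discount are all hypothetical, and it presumes the system can label context at all, which is itself a hard problem.

```python
# Hypothetical per-context weights: how much a play in that context
# should count toward the listener's core taste profile.
CONTEXT_WEIGHTS = {
    "personal": 1.0,
    "workout": 0.6,
    "sleep": 0.1,
    "kids": 0.0,
}


def taste_weight(context, shared_device=False):
    """Return a multiplier in [0, 1] for a play's influence on taste."""
    weight = CONTEXT_WEIGHTS.get(context, 0.5)  # unknown context: half weight
    if shared_device:
        weight *= 0.5  # assumption: someone else may be choosing the music
    return weight
```

Today the equivalent of every weight above is 1.0 unless the user manually excludes the track, which is the burden this pain point describes.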

3: The Conflict Gap

When a user’s declared preferences conflict with their observed behavior, the system has no way to surface or resolve that conflict. Consider a user who blocked all EDM but consistently saves high-energy electronic tracks, or a user who set a “focus” preference but opens the app during social hours. The system sees the contradiction but has no mechanism to ask the user about it or learn from it.
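Detecting this kind of conflict is the easy part, and a short sketch shows why. The function below compares declared genre blocks against saved tracks. The name `find_conflicts`, the `(track_id, genre)` input shape, and the `min_saves` default are assumptions made for this example only.

```python
from collections import Counter


def find_conflicts(blocked_genres, saved_tracks, min_saves=3):
    """Return blocked genres the user has saved at least min_saves times.

    saved_tracks is an iterable of (track_id, genre) pairs.
    min_saves is a hypothetical threshold to ignore one-off saves.
    """
    saves_per_genre = Counter(genre for _, genre in saved_tracks)
    return sorted(g for g in blocked_genres if saves_per_genre[g] >= min_saves)
```

The hard product question is what happens after the list comes back: whether the system asks the user, quietly relaxes the block, or keeps deferring to the declared preference.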

Interview Tip: Notice how each pain point connects to a specific gap in the current system, not a missing feature. This is the difference between “product sense” and “feature brainstorming.” Strong candidates diagnose system-level problems. Weak candidates list feature ideas.

Step 4: Propose Solutions Using the Trust Layer Framework

I will organize my solutions using what I call the Trust Layer framework, which maps to three different relationships between the user and the AI system.